Why Waiting Seconds Can Cost Millions Today

In today’s digital world, speed is not a luxury anymore – it’s a basic need. Whether it’s real-time data, AI applications, or smooth user experiences, businesses can’t afford delays. That’s why moving from a traditional data center to an edge data center has become so important.

Edge computing is changing how data is handled. Instead of sending everything to a faraway central server, businesses now process data closer to where it is created. This helps improve speed, reduce delays, and deliver faster results when it matters most.

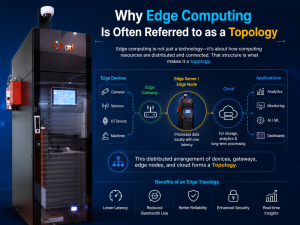

What Is Edge Computing?

Edge computing is a technology where data is processed closer to where it is generated, such as devices, sensors, or local systems, instead of sending everything to a distant data center. This reduces delays and creates a low-latency network, which is critical for modern applications.
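To make the latency point concrete, here is a rough back-of-the-envelope sketch. It assumes signals travel through optical fiber at roughly 200,000 km/s (about two-thirds of the speed of light in a vacuum) and ignores routing, queuing, and server processing time, so real round trips are longer. The distances and the function name are illustrative assumptions, not measurements.

```python
# Rough estimate of round-trip propagation delay over optical fiber.
# Assumption: signal speed in fiber is about 200,000 km/s.
FIBER_SPEED_KM_PER_S = 200_000


def round_trip_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds.

    Only covers time on the wire; routing, queuing, and server
    processing add to this in practice.
    """
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    # Hypothetical distances chosen for illustration only.
    sites = [
        ("edge site", 10),
        ("regional data center", 500),
        ("distant data center", 2000),
    ]
    for label, km in sites:
        print(f"{label:>22}: {round_trip_ms(km):.2f} ms")
```

Even in this idealized model, the delay grows in direct proportion to distance, which is why moving compute closer to the data source shortens response times.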

Real-World Edge Computing Examples

Based on the NPod use case, edge computing is already transforming industries such as:

- Telecom networks managing 5G traffic in real time

- Retail stores processing transactions instantly across locations

- Healthcare systems storing and accessing patient data across locations

- Educational campuses enabling real-time learning

These edge computing examples show how businesses are shifting toward localized processing.

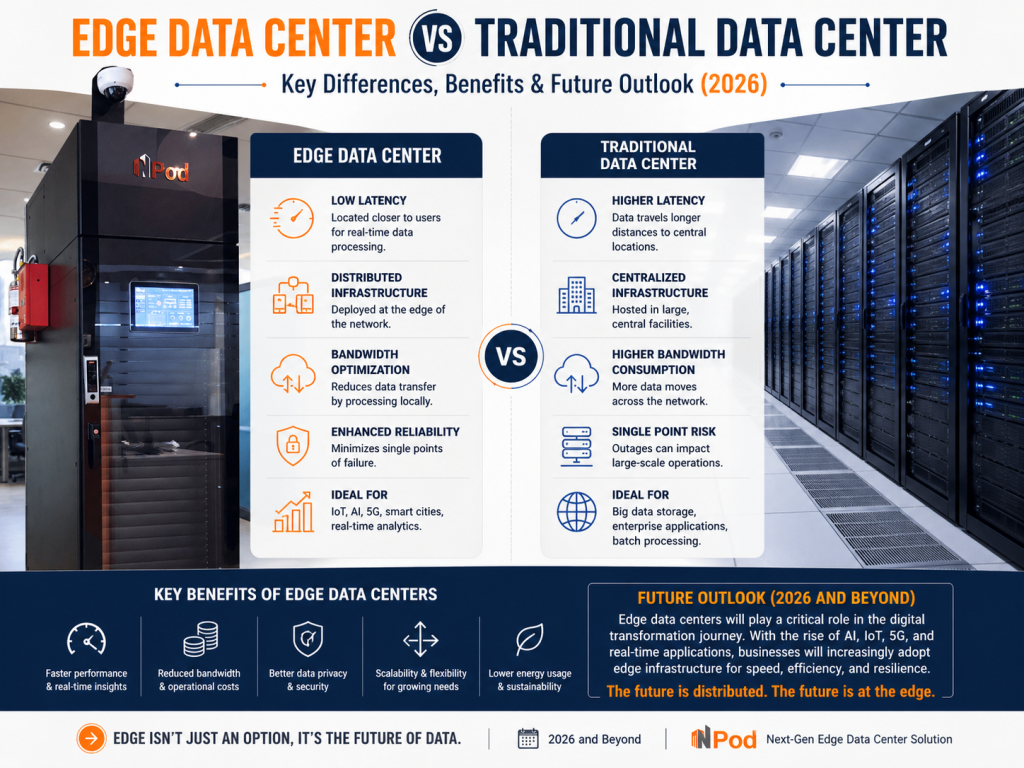

Traditional Data Center: Strong but Slower for Modern Needs

A traditional data center is a centralized facility where all computing, storage, and networking resources are located.

Key Characteristics:

- Large-scale infrastructure

- Centralized data processing

- High setup time and cost

- Requires dedicated physical space

The Problem Today

- Deployment can take months or even years

- High infrastructure and operational costs

- Higher latency due to distance from users

While still essential for storage and enterprise systems, traditional models struggle to meet real-time demands.

Edge Data Center: Built for Speed, Flexibility, and Real-Time Performance

An edge data center is a compact, decentralized system designed to process data closer to users. NPod represents that concept perfectly.

What Makes NPod an Ideal Edge Data Center

The NPod micro data center is:

- Compact and modular – can fit anywhere

- Rapidly deployable – ready in weeks instead of months

- Equipped with built-in cooling, UPS, and monitoring

- Designed for remote management and real-time operations

Unlike traditional setups, an edge data center like NPod is self-contained—meaning it includes power, cooling, security, and compute in one unit.

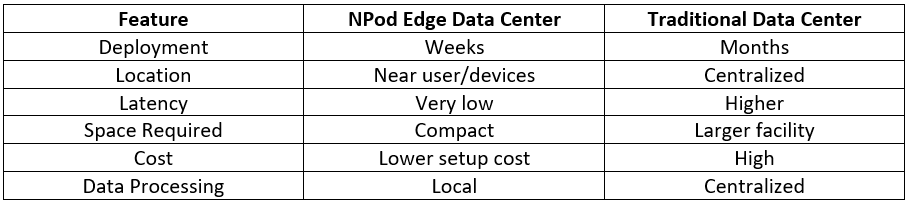

Edge Data Center Vs. Traditional Data Center: The Real Comparison

Here’s a clear comparison based on the NPod edge data center:

Edge Data Center Benefits That Businesses Can’t Ignore

Today’s businesses are moving toward edge data centers because they need speed, flexibility, and better control over their operations. Solutions like NPod are designed to bring computing closer to where data is created, helping organizations work faster and more efficiently without depending only on distant data centers.

- Real-Time Data Processing: With NPod, data is processed locally instead of on faraway GPU servers. This reduces delays and allows businesses to get results almost instantly. It is especially useful for applications like AI, IoT, and real-time analytics where every second matters.

- Faster Deployment: Traditional data centers can take months to build and set up. NPod, on the other hand, is predesigned and ready to deploy, allowing businesses to go live in just days or weeks. This helps companies respond quickly to market demand and scale operations without long waiting times.

- Built-In Infrastructure: Each NPod unit comes with everything needed to run smoothly, including a cooling system, power backup (UPS), fire protection, and smart monitoring tools. This all-in-one design removes the need for complex installation and reduces setup effort significantly.

- Lower Cost & Less Complexity: By eliminating the need for large construction projects and reducing infrastructure requirements, NPod helps businesses save both time and money. It offers a simpler and more cost-effective way to build and manage IT infrastructure without compromising performance.

- Improved Reliability: NPod is built with an integrated monitoring and protection system that ensures stable performance and higher uptime. Businesses can rely on consistent operations, better system health, and fewer disruptions in their daily processes.

These practical benefits show why edge data centers are becoming a key part of modern digital infrastructure across industries.
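As a minimal sketch of what processing data locally can look like in practice, the snippet below summarizes a batch of sensor readings at the edge and keeps only the summary plus any out-of-range values for forwarding. The function name, threshold, and sample values are hypothetical. This is not NPod's software, only an illustration of the general pattern.

```python
from statistics import mean


def summarize_readings(readings: list[float], alert_threshold: float) -> dict:
    """Reduce a batch of raw readings to a small summary.

    Hypothetical helper: an edge node could send this summary upstream
    instead of every raw reading.
    """
    if not readings:
        return {"count": 0, "mean": None, "max": None, "alerts": []}
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        # Keep only readings above the threshold for immediate action.
        "alerts": [r for r in readings if r > alert_threshold],
    }


if __name__ == "__main__":
    # Illustrative temperature readings in degrees Celsius.
    batch = [21.5, 22.0, 21.8, 35.2, 22.1]
    print(summarize_readings(batch, alert_threshold=30.0))
```

With this approach, the alert can be acted on at the site right away, while the central data center receives a compact summary rather than the full raw stream.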

Future Outlook 2026: Edge Is No Longer Optional

The future of IT infrastructure is no longer built around large, centralized systems. Instead, it is rapidly shifting toward a decentralized model where computing power is distributed closer to where data is generated. As digital transformation accelerates across industries, businesses are recognizing that traditional infrastructure alone cannot meet the demands of real-time applications, AI-driven insights, and always-on connectivity. In this evolving landscape, edge computing is becoming a critical foundation rather than an optional upgrade.

Key Trends Shaping the Future

- Rise of AI and IoT Workloads

The rapid growth of artificial intelligence and IoT devices is generating massive volumes of data at the source. Processing this data in real time is essential for applications such as predictive maintenance, smart manufacturing, and autonomous systems. Edge data centers enable faster, localized processing, reducing delays and improving decision-making accuracy.

- Growth of Micro Data Centers

Traditional data center deployments often require significant time, space, and investment. In contrast, micro data centers like NPod offer a compact, modular, and preintegrated solution that can be deployed quickly. These systems are designed for flexibility, allowing businesses to scale infrastructure as needed without complex construction or long development cycles.

- Increasing Demand for Instant Processing

Modern applications demand immediate response times. Whether it is real-time analytics, customer interactions, or automated systems, even slight delays can impact performance and user experience. Edge infrastructure supports low-latency operations, enabling businesses to process and act on data instantly.

- Expansion of 5G and Connected Ecosystems

The rollout of 5G networks is accelerating the need for distributed infrastructure. With higher speed and lower latency, 5G enables advanced use cases such as smart cities, connected healthcare, and intelligent transportation. Edge data centers play a vital role in supporting these environments by handling data closer to the network edge.

- Shift Toward Distributed Infrastructure Models

Organizations are moving away from a one-size-fits-all infrastructure approach. Instead, they are adopting hybrid and distributed models that combine centralized data centers with edge deployments. This allows for greater flexibility, improved performance, and better alignment with location-specific requirements.
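One way to picture a hybrid model is a simple placement rule: latency-sensitive workloads run at the edge, and everything else goes to the central data center. The sketch below assumes each workload declares a latency budget in milliseconds. The `Workload` class, the 20 ms cutoff, and the workload names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Assumed cutoff: workloads needing a response faster than this run at the edge.
EDGE_LATENCY_CUTOFF_MS = 20.0


@dataclass
class Workload:
    name: str
    latency_budget_ms: float  # maximum acceptable response time


def choose_site(workload: Workload) -> str:
    """Return "edge" for latency-sensitive workloads, otherwise "central"."""
    if workload.latency_budget_ms < EDGE_LATENCY_CUTOFF_MS:
        return "edge"
    return "central"


if __name__ == "__main__":
    # Hypothetical workloads for illustration only.
    for w in [Workload("video analytics", 10), Workload("nightly backup", 60_000)]:
        print(f"{w.name}: {choose_site(w)}")
```

A real placement policy would also weigh factors such as data volume, cost, and compliance, but the latency budget captures the core trade-off described above.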

Conclusion

The shift toward distributed infrastructure is already changing how businesses manage data and performance. Edge computing brings processing closer to users, which means faster response times and better efficiency. At the same time, traditional data centers still play an important role by handling large-scale storage and heavy workloads.

The future is not about choosing one over the other—it’s about using both together. Businesses that combine edge and traditional data centers will be better prepared for real-time needs, AI workloads, and the next phase of digital growth.